Construction sites, by their very nature, are packed with an ever-changing array of potential hazards. Even with rigorous safety protocols in place, it can be all too easy to wind up too close to operational plant or neglect to wear the required personal protective equipment (PPE).

Contractor Winvic believes that artificial intelligence (AI) holds the key to making construction sites safer by giving people reliable warnings before they put themselves in danger.

The company has teamed up with the Big Data Enterprise & Artificial Intelligence Lab (Big-DEAL) at the University of the West of England (UWE) and video technology specialist One Big Circle (OBC) to develop a system that will understand where the current hazards are and send personal alerts via smartphones when someone is about to go somewhere risky.

This article was first published in the Dec/Jan 2021 issue of The Construction Index magazine. Sign up online.

UWE and OBC are sharing a £600,000 Innovate UK ‘Smart Grant’ with Winvic on the project, which will run for two years and will see the three organisations bring together real-time images and machine learning technologies on two Winvic sites running consecutively.

Winvic technical director Tim Reeve describes the concept as being a 24/7 on-site ‘eye in the sky’ health & safety alert system. “We were discussing improving site safety and how we could go about that using technology,” he says. “We came up with this idea of preventing people getting anywhere near a potential accident before it happens.”

The company began site work at the end of October on the first of the two projects where the system will be developed and put through its paces. Transport and logistics company DSV has engaged Winvic to design, construct and partially fit out its new industrial facility at Mercia Park in north-west Leicestershire.

“The site is one that lends itself perfectly to what we are trying to do,” says Reeve, who is Winvic’s lead on the AI project, dubbed ‘Computer-Vision-Smart’. He says that Mercia Park is a relatively straightforward project, although it comprises multiple buildings and exterior works.

Largest of the buildings is a 358,000 sq ft steel-framed warehouse containing three mezzanine floors and two single-storey hub offices. There will also be a 112,000 sq ft cross-dock terminal that contains a 7,050 sq ft single-storey hub office and a 35,660 sq ft three-storey office building. The project’s yard and car park will provide open spaces for the cameras to look across in the early stages while all work is taking place outside.

The cameras placed around the site can now start capturing data so that team members on the AI side can begin their analysis and get the predictive machine learning under way, says Reeve. The external and – later into the programme – internal site cameras will capture video images continuously. The AI will learn to identify any hazards, ranging from overhead works or the movement of heavy machinery to more general issues like people lacking the correct PPE. The team believes that the machine learning models will be able to make an increasing number of intelligent predictions as the project progresses.

Reeve thinks the concept of the Computer-Vision-Smart project is “fantastic” but acknowledges that a very challenging task lies ahead. “We are quite confident that we’ll get something meaningful out of the two years – an end product that we can take forward,” he says.

The Mercia Park project is being viewed primarily as an exercise in data capture and a trial of how to set up the site alerts. The camera systems will feed into large-screen TVs that will be installed in site cabins. The display will also be populated with scrolling outputs of the alerts that the AI is starting to report, enabling the site team to check on what is being detected. “At the same time, we are developing an app to work alongside it,” Reeve says. “We don’t think we’ll get the app into people’s hands and into their pockets probably until our second project kicks off, which we think will be about August next year.”

The AI may be ready for a tougher role once work at Mercia Park is completed next summer. “We might choose a slightly more complex site for the second one,” says Reeve. This will depend on progress, including how well the AI has tweaked itself over the initial period and how effective it is at recognising problems from the images it is given.

Each of the three organisations has a part to play in the system’s development, with obvious input from Winvic in provision of the demonstration projects plus knowledge of health & safety on site. “There are also historical data sets so we know the kind of trends that happen,” says Reeve. OBC has computer analysts and technical staff, while UWE brings in the academic and research side. Together they believe they can align development to the needs of the construction industry.

Reeve says the use of intelligent digital technologies in construction to deliver projects more rapidly, cost-effectively and safely has long been an aspiration of Winvic’s. “With the solution that has been conceived will come better opportunities than ever before to reach our zero-harm aim.”

There are perceived gaps in existing approaches across the construction industry. Safety managers and operatives often depend on self-reporting or warnings from co-workers, which can simply come too late to avoid an incident. Existing camera-based systems generally focus on aspects such as site security, dispute avoidance and project progress, rather than safety. Alert systems tend to be based on proximity to a particular piece of plant, with the aim of letting the operator know who or what is near their machine – essentially extending their line of sight.

“We are coming at it from a slightly different angle,” says Reeve. “We want to give the people on the ground the line of sight. The immediate concern is to make that individual stop and think. If we can prevent people from going into high-risk or medium-risk areas then we can prevent accidents.”

The dangers of walking very close to actual operations might appear to be obvious, but in reality there is also a wider area of influence. For instance, a piece of plant might suddenly start moving in a different direction. “People think they are a long way from it but they may still be in proximity to a potential accident,” says Reeve.

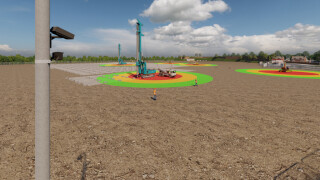

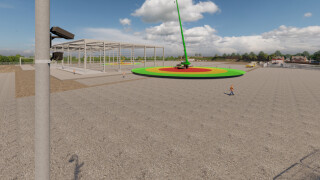

Thanks to a geographic information system (GIS), the AI will be aware of the exact locations of personnel and plant and will learn to identify safe zones, essentially drawing a virtual radius around potential hazards.

When someone enters the hazard zone, the individual – and, when appropriate, other members of the site team – will receive a personal alert via a smartphone app or a wearable device. The warning will become constant if they enter the zone of full alert. There is also scope for escalation if there is a site-wide alert of immediate concern.
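As a rough illustration of the 'virtual radius' idea, the check could be sketched as below. This is a minimal sketch under stated assumptions, not the project's implementation: it assumes positions are already available as flat site coordinates in metres, that each hazard has two circular zones (an outer warning zone and an inner zone of full alert), and every name and radius in it is hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Hazard:
    """A hazard with two concentric zones (hypothetical model, radii in metres)."""
    name: str
    x: float
    y: float
    full_alert_radius_m: float
    warning_radius_m: float


def alert_level(person_x: float, person_y: float, hazard: Hazard) -> str:
    """Classify a person's position relative to one hazard."""
    distance = math.hypot(person_x - hazard.x, person_y - hazard.y)
    if distance <= hazard.full_alert_radius_m:
        return "full_alert"  # inner zone: the warning becomes constant
    if distance <= hazard.warning_radius_m:
        return "warning"     # outer zone: a personal alert is sent
    return "clear"


# Illustrative values only: an excavator at the site origin.
excavator = Hazard("excavator", 0.0, 0.0, full_alert_radius_m=5.0, warning_radius_m=15.0)
print(alert_level(20.0, 0.0, excavator))
print(alert_level(9.0, 12.0, excavator))
print(alert_level(3.0, 4.0, excavator))
```

In the system Reeve describes, the zones would be learned and adjusted by the AI as plant moves, rather than fixed by hand as they are here.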

Project managers are really the end users of the system being developed and their input has been invaluable. “The original concept ideas are being fine-tuned by the feedback we get from site,” says Reeve. “We’ve had a very good reaction and our staff have engaged with it as well. It’s caught the imagination of everybody involved, which is fantastic.”

Managers will also be able to review the alerts and relevant video segments from a laptop. This should help them spot any issues that are catching multiple people out – or any people who consistently flout site rules, such as regularly taking shortcuts to save a few seconds when heading for their lunch.

There will be weekly or fortnightly project meetings, where the appropriate people from all three organisations will plan the next tranche of development. “What we are going to do initially is give the AI some help,” says Reeve. It will be told, for instance, what a crane looks like and shown the extent of the surrounding zones that could be dangerous to enter. After that, the technology will be programmed to pick up movements of the kit on the site, as well as deliveries and site vehicles. “It will start to predict its own sets of health & safety rings around them,” he says.

He likens it to watching a child learn to walk – giving it a little guidance to show how it’s done and gently nudging it towards learning and doing things itself.

“The idea is that the AI will build up a library of images and predictions,” says Reeve. It will learn about the project, identifying changing areas of interest as the build progresses. “What we are going to try and do is link the build into the AI, so that it starts to understand the sequence and the direction of work so that it can predict high-risk areas.”

The AI will also be on the look-out for movement – for example learning the difference between a piece of cladding being installed and a fixed steel column that is not about to go anywhere.

The team is exploring whether other ‘wearables’ might be needed in addition to phones. Smart watches, wristbands and even ‘old-fashioned pagers’ have been looked at. “We are trying to make it very easy for people to connect with,” says Reeve.

Job functions will have to be taken into account. Details that will be addressed include ensuring that people at the ‘epicentre’ of a risk – excavator operators for instance – aren’t bombarded with constant alerts. The system will need to learn the difference between them and somebody straying into the hazard area.
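One simple way to picture this is a list of people authorised to work inside a given zone, who are then left out of its alerts. The sketch below is purely illustrative: the article does not say how the system will tell operators from passers-by, and the `HazardZone` class, the `authorised_ids` field and the ID strings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HazardZone:
    """A hazard zone plus the people expected to be inside it (hypothetical model)."""
    name: str
    authorised_ids: frozenset = frozenset()


def people_to_alert(zone: HazardZone, people_in_zone: list) -> list:
    """Return only those in the zone who are not authorised to be working there."""
    return [person for person in people_in_zone if person not in zone.authorised_ids]


# Illustrative IDs only: the operator is inside the zone by design, the visitor is not.
dig_zone = HazardZone("excavator dig", authorised_ids=frozenset({"operator-17"}))
print(people_to_alert(dig_zone, ["operator-17", "visitor-02"]))
```

A fixed list like this is the hand-written version of the distinction; the stated aim is for the system to learn it.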

There will still be a role for interventions that are social, rather than technical, in nature. For example, personal input will be needed if the project manager starts to see a particular person getting a high number of alerts every day. Privacy issues mean that the project won’t be using facial recognition, but it will still be able to identify each user of the system.

The social side will also involve establishing a mind-set whereby wearing PPE becomes second-nature. Historically, this would come from members of the site team speaking to anyone without the right kit – but the culprit might then remove their hard hat when they are out of sight. In contrast, the camera will always be on the look-out and so can assist with monitoring and enforcement. “We definitely want to target the correct wearing of PPE, such as hard hats and high-vis vests too,” says Reeve.

The Computer-Vision-Smart project was originally submitted to Innovate UK last year, before the outbreak of Covid-19, so its objectives would need to change slightly if the team decided to incorporate social distancing. “We may look to see if this could be an ‘add-on’ to the features already planned for the system,” says Reeve. “We already have clear and responsible guidelines on social distancing as part of our site operating procedures. There is of course already the NHS Track & Trace app.”

The AI’s role ties in with another safety-focused initiative that Winvic has launched this year. The ‘Doing It Right’ programme is aimed at further improvements in safe behaviour, targeting ‘walk-bys’ – incidents where people turn a blind eye to something potentially hazardous. “We’ve got a ‘don’t walk by’ slogan,” says Reeve. “If you see something, don’t just walk by – stop, say something, raise the alert. We’re hoping that using the AI will assist us in monitoring those activities that sometimes don’t get seen.”

UWE Bristol and Winvic are also partners on another AI-enabled project, called Conversational BIM. This involves the development of a voice-activated headset connected to a BIM model. It is being designed to allow users to retrieve any and all project design and construction information with a simple vocal request.

Reeve believes that the new Computer-Vision-Smart project’s achievements over the next two years will generate a significant leap forward not just for Winvic, but potentially for safety across the whole of the construction industry. “Ultimately, one of the objectives is to have an end product that we could sell to the marketplace,” he says.

As well as customising the system for Winvic’s purposes, the team will also be looking at what else needs to be done to make it suitable for others. This will involve bringing in other contractors to get their feedback. “We’ve had a lot of interest from the rail side as well,” he adds.
